Using custom priors, likelihood, or movements in outbreaker2

Thibaut Jombart

2021-02-09

customisation.RmdIn this vignette, we show how custom functions for priors, likelihood, or movement of parameters and augmented data can be used in outbreaker2. In all these functions, the process will be similar:

- write your own function with the right arguments

- pass this function as an argument to a

custom...function - pass the result to outbreaker2

Note that 2-3 can be a single step if passing the function to the arguments of outbreaker2 directly. Also note that all priors and likelihoods are expected on a log scale. Finally, also note that while the various custom... functions will try to some extent to check that the provided functions are valid, such tests are very difficult to implement. In short: you are using these custom features at your own risks - make sure these functions work before passing them to outbreaker2.

Customising priors

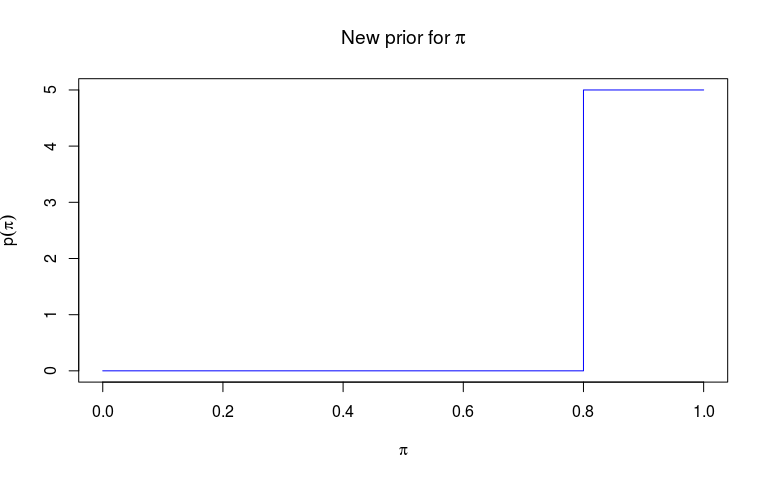

Priors of outbreaker2 must be a function of an outbreaker_param list (see ?outbreaker_param). Here, we decide to use a step function rather than the default Beta function as a prior for pi, the reporting probability, and a flat prior between 0 and 1 for the mutation rate (which is technically a probability in the basic genetic model used in outbreaker2).

We start by defining two functions: an auxiliary function f which returns values on the natural scale, and which we can use for plotting the prior distribution, and then a function f_pi which will be used for the customisation.

f <- function(pi) {

ifelse(pi < 0.8, 0, 5)

}

f_pi <- function(param) {

log(f(param$pi))

}

plot(f, type = "s", col = "blue",

xlab = expression(pi), ylab = expression(p(pi)),

main = expression(paste("New prior for ", pi)))

While f is a useful function to visualise the prior, f_pi is the function which will be passed to outbreaker. To do so, we pass it to custom_priors:

library(outbreaker2)

f_mu <- function(param) {

if (param$mu < 0 || param$mu > 1) {

return(-Inf)

} else {

return(0.0)

}

}

priors <- custom_priors(pi = f_pi, mu = f_mu)

priors

#>

#>

#> ///// outbreaker custom priors ///

#>

#> class: custom_priors list

#> number of items: 4

#>

#> /// custom priors set to NULL (default used) //

#> $eps

#> NULL

#>

#> $lambda

#> NULL

#>

#> /// custom priors //

#> $mu

#> function(param) {

#> if (param$mu < 0 || param$mu > 1) {

#> return(-Inf)

#> } else {

#> return(0.0)

#> }

#>

#> }

#>

#> $pi

#> function(param) {

#> log(f(param$pi))

#> }Note that custom_priors does more than just adding the custom function to a list. For instance, the following customisations are all wrong, and rightfully rejected:

## wrong: not a function

## should be pi = function(x){0.0}

custom_priors(pi = 0.0)

#> Error in custom_priors(pi = 0): The following priors are not functions: pi

## wrong: two arguments

custom_priors(pi = function(x, y){0.0})

#> Error in custom_priors(pi = function(x, y) {: The following priors dont' have a single argument: piWe can now use the new priors to run outbreaker on the fake_outbreak data (see introduction vignette):

dna <- fake_outbreak$dna

dates <- fake_outbreak$sample

w <- fake_outbreak$w

data <- outbreaker_data(dna = dna, dates = dates, w_dens = w)

## we set the seed to ensure results won't change

set.seed(1)

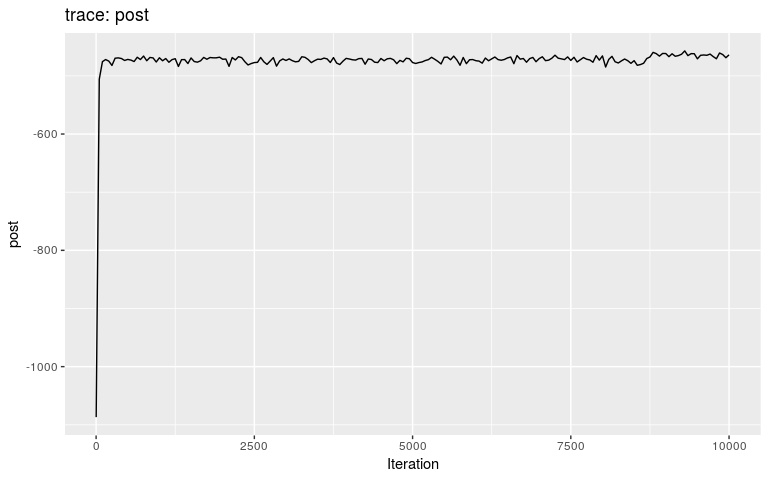

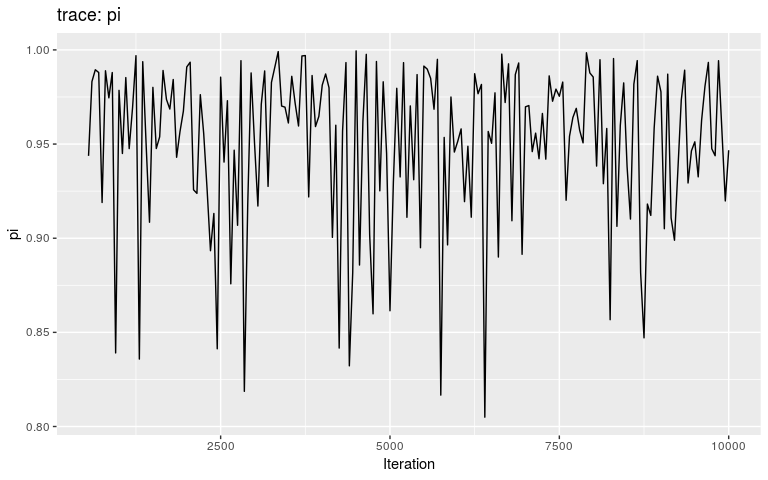

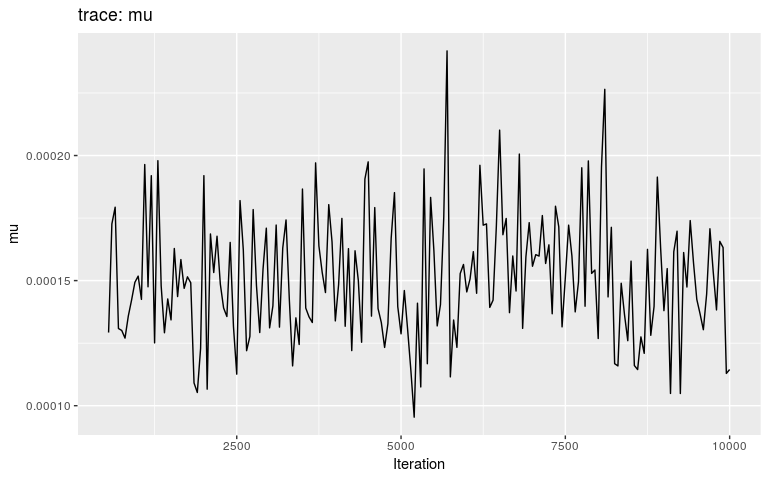

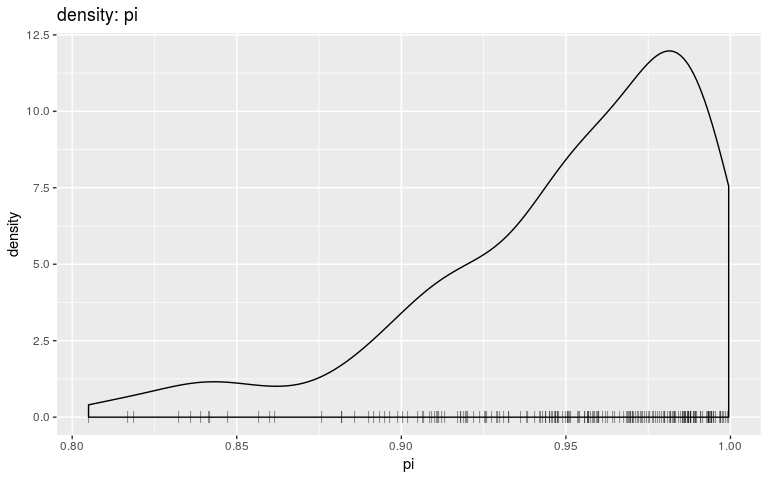

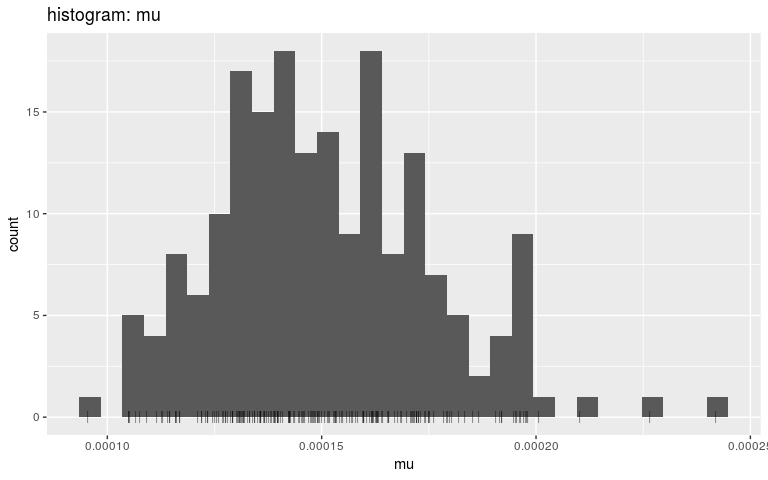

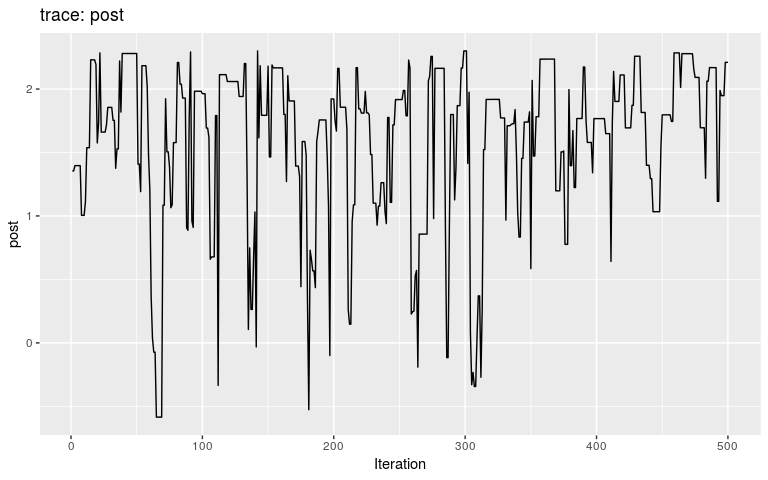

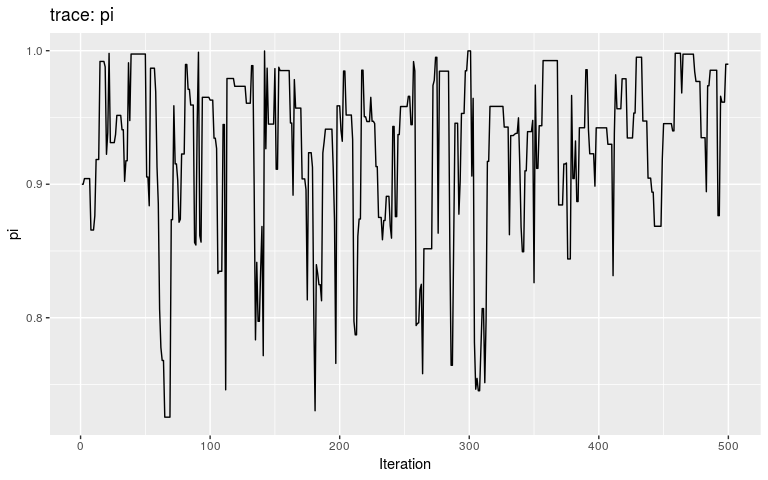

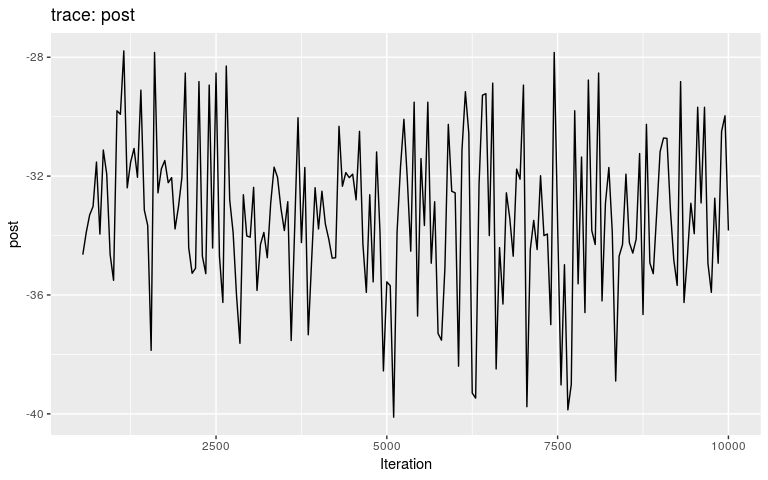

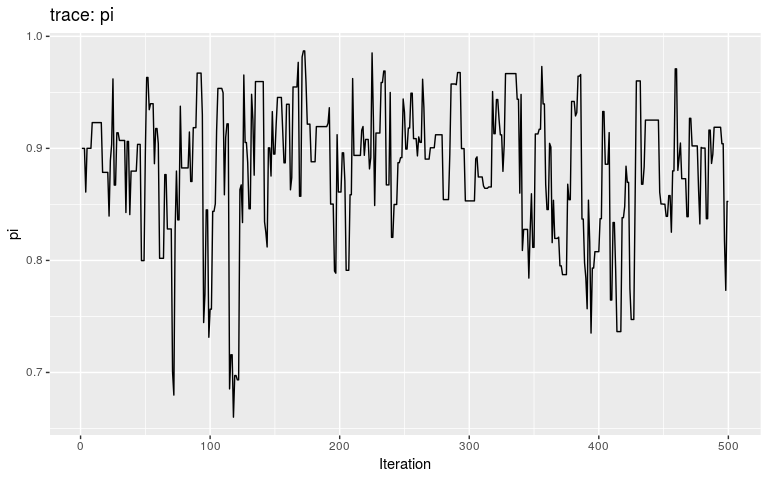

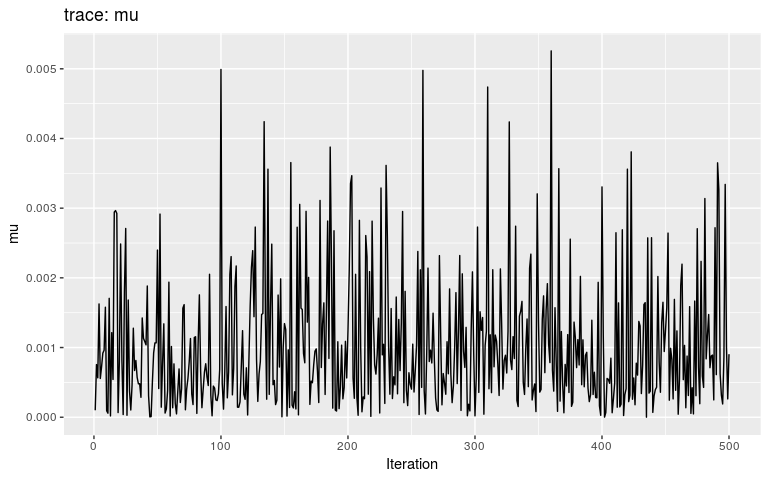

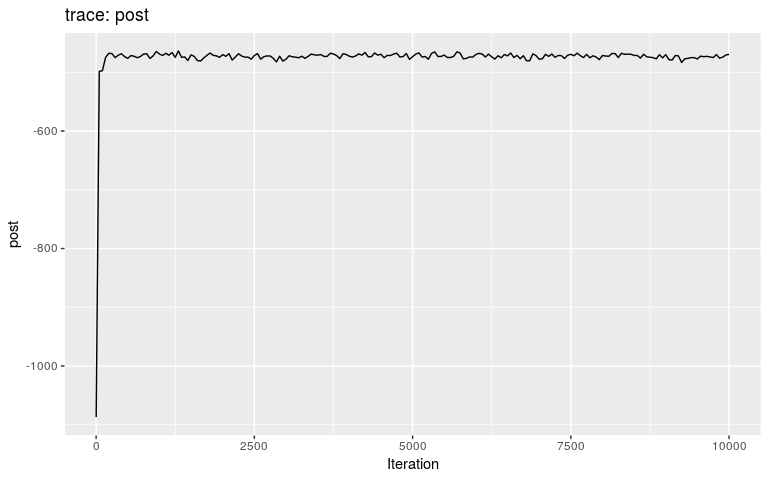

res <- outbreaker(data = data, priors = priors)We can check the results first by looking at the traces, and then by plotting the posterior distributions of pi and mu, respectively:

plot(res)

plot(res, "pi", burnin = 500)

plot(res, "mu", burnin = 500)

plot(res, "pi", type = "density", burnin = 500)

plot(res, "mu", type = "hist", burnin = 500)

#> `stat_bin()` using `bins = 30`. Pick better value with `binwidth`.

Note that we are using density and histograms here for illustrative purposes, but there is no reason to prefer one or the other for a specific parameter.

Interestingly, the trace of pi suggests that the MCMC oscillates between two different states, on either bound of the interval on which the prior is positive (it is -Inf outside (0.8; 1)). This may be a consequence of the step function, which causes sharp ‘cliffs’ in the posterior landscape. What shall one do to derive good samples from the posterior distribution in this kind of situation? There are several options, which in fact apply to typical cases of multi-modal posterior distributions:

Avoid ‘cliffs’, i.e. sharp drops in the posterior landscape, typically created by using step-functions in likelihoods and in priors.

Use larger samples, i.e. run more MCMC iterations.

Use a different sampler, better than Metropolis-Hasting at deriving samples from multi-modal distributions.

Because we know what the real transmission tree is for this dataset, we can assess how the new priors impacted the inference of the transmission tree.

summary(res, burnin = 500)

#> $step

#> first last interval n_steps

#> 550 10000 50 190

#>

#> $post

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -481.1 -467.5 -463.9 -464.7 -461.4 -456.7

#>

#> $like

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -482.7 -469.1 -465.5 -466.3 -463.0 -458.3

#>

#> $prior

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 1.609 1.609 1.609 1.609 1.609 1.609

#>

#> $mu

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 8.215e-05 1.254e-04 1.400e-04 1.419e-04 1.566e-04 2.159e-04

#>

#> $pi

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 0.8031 0.9207 0.9556 0.9453 0.9813 0.9999

#>

#> $tree

#> from to time support generations

#> 1 NA 1 -1 NA NA

#> 2 1 2 1 1.000000000 1

#> 3 2 3 3 1.000000000 1

#> 4 NA 4 3 0.005263158 NA

#> 5 3 5 4 0.994736842 1

#> 6 9 6 6 1.000000000 1

#> 7 4 7 5 0.994736842 1

#> 8 5 8 6 0.989473684 1

#> 9 4 9 5 0.968421053 1

#> 10 6 10 8 1.000000000 1

#> 11 7 11 7 0.689473684 1

#> 12 5 12 7 0.826315789 1

#> 13 9 13 7 1.000000000 1

#> 14 5 14 7 0.768421053 1

#> 15 5 15 7 0.752631579 1

#> 16 7 16 8 0.794736842 1

#> 17 7 17 7 0.626315789 1

#> 18 8 18 9 0.431578947 1

#> 19 9 19 8 1.000000000 1

#> 20 10 20 10 0.968421053 1

#> 21 11 21 10 0.973684211 1

#> 22 11 22 10 1.000000000 1

#> 23 13 23 9 1.000000000 1

#> 24 13 24 9 1.000000000 1

#> 25 13 25 9 1.000000000 1

#> 26 17 26 9 1.000000000 1

#> 27 17 27 10 1.000000000 1

#> 28 NA 28 9 NA NA

#> 29 10 29 11 1.000000000 1

#> 30 13 30 10 1.000000000 1

tree <- summary(res, burnin = 500)$tree

comparison <- data.frame(case = 1:30,

inferred = paste(tree$from),

true = paste(fake_outbreak$ances),

stringsAsFactors = FALSE)

comparison$correct <- comparison$inferred == comparison$true

comparison

#> case inferred true correct

#> 1 1 NA NA TRUE

#> 2 2 1 1 TRUE

#> 3 3 2 2 TRUE

#> 4 4 NA NA TRUE

#> 5 5 3 3 TRUE

#> 6 6 9 4 FALSE

#> 7 7 4 4 TRUE

#> 8 8 5 5 TRUE

#> 9 9 4 6 FALSE

#> 10 10 6 6 TRUE

#> 11 11 7 7 TRUE

#> 12 12 5 8 FALSE

#> 13 13 9 9 TRUE

#> 14 14 5 5 TRUE

#> 15 15 5 5 TRUE

#> 16 16 7 7 TRUE

#> 17 17 7 7 TRUE

#> 18 18 8 8 TRUE

#> 19 19 9 9 TRUE

#> 20 20 10 10 TRUE

#> 21 21 11 11 TRUE

#> 22 22 11 11 TRUE

#> 23 23 13 13 TRUE

#> 24 24 13 13 TRUE

#> 25 25 13 13 TRUE

#> 26 26 17 17 TRUE

#> 27 27 17 17 TRUE

#> 28 28 NA NA TRUE

#> 29 29 10 10 TRUE

#> 30 30 13 13 TRUE

mean(comparison$correct)

#> [1] 0.9Customizing likelihood

Likelihood functions customisation works identically to prior functions. The only difference is that custom functions will take two arguments (data and param) instead of one in the prior functions. The function used to specify custom likelihood is custom_likelihoods. Each custom function will correspond to a specific likelihood component:

custom_likelihoods()

#>

#>

#> ///// outbreaker custom likelihoods ///

#>

#> class: custom_likelihoods list

#> number of items: 5

#>

#> /// custom likelihoods //

#> $genetic

#> $genetic[[1]]

#> NULL

#>

#> $genetic[[2]]

#> [1] 0

#>

#>

#> $reporting

#> $reporting[[1]]

#> NULL

#>

#> $reporting[[2]]

#> [1] 0

#>

#>

#> $timing_infections

#> $timing_infections[[1]]

#> NULL

#>

#> $timing_infections[[2]]

#> [1] 0

#>

#>

#> $timing_sampling

#> $timing_sampling[[1]]

#> NULL

#>

#> $timing_sampling[[2]]

#> [1] 0

#>

#>

#> $contact

#> $contact[[1]]

#> NULL

#>

#> $contact[[2]]

#> [1] 0see ?custom_likelihoods for details of these components, and see the section ‘Extending the model’ for new, other components. As for custom_priors, a few checks are performed by custom_likelihoods:

## wrong: not a function

custom_likelihoods(genetic = "fubar")

#> Error in custom_likelihoods(genetic = "fubar"): The following likelihoods are not functions: genetic

## wrong: only one argument

custom_likelihoods(genetic = function(x){ 0.0 })

#> Error in custom_likelihoods(genetic = function(x) {: The following likelihoods do not have arity two or three: geneticA trivial customisation is to disable some or all of the likelihood components of the model by returning a finite constant. Here, we apply this to two cases: first, we will disable all likelihood components as a sanity check, making sure that the transmission tree landscape is explored freely by the MCMC. Second, we will recreate the Wallinga & Teunis (1994) model, by disabling specific components.

A null model

f_null <- function(data, param) {

return(0.0)

}

null_model <- custom_likelihoods(genetic = f_null,

timing_sampling = f_null,

timing_infections = f_null,

reporting = f_null,

contact = f_null)

null_model

#>

#>

#> ///// outbreaker custom likelihoods ///

#>

#> class: custom_likelihoods list

#> number of items: 5

#>

#> /// custom likelihoods //

#> $genetic

#> $genetic[[1]]

#> function(data, param) {

#> return(0.0)

#> }

#>

#> $genetic[[2]]

#> [1] 2

#>

#>

#> $reporting

#> $reporting[[1]]

#> function(data, param) {

#> return(0.0)

#> }

#>

#> $reporting[[2]]

#> [1] 2

#>

#>

#> $timing_infections

#> $timing_infections[[1]]

#> function(data, param) {

#> return(0.0)

#> }

#>

#> $timing_infections[[2]]

#> [1] 2

#>

#>

#> $timing_sampling

#> $timing_sampling[[1]]

#> function(data, param) {

#> return(0.0)

#> }

#>

#> $timing_sampling[[2]]

#> [1] 2

#>

#>

#> $contact

#> $contact[[1]]

#> function(data, param) {

#> return(0.0)

#> }

#>

#> $contact[[2]]

#> [1] 2We also specify settings via the config argument to avoid detecting imported cases, reduce the number of iterations and sampling each of them:

null_config <- list(find_import = FALSE,

n_iter = 500,

sample_every = 1)

set.seed(1)

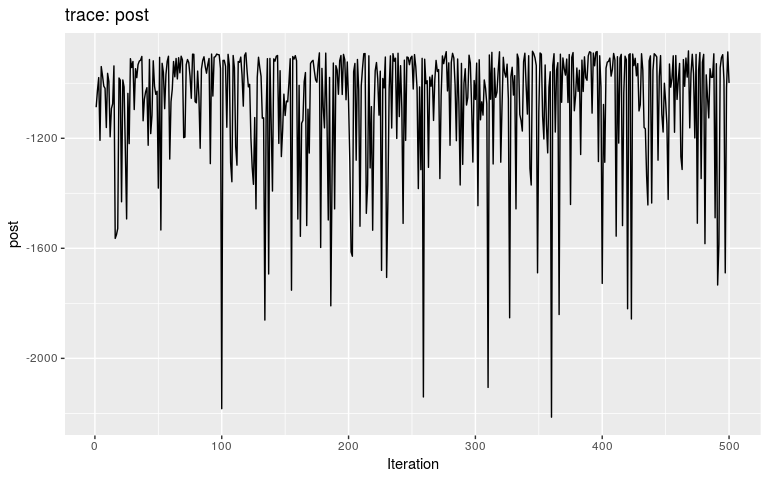

res_null <- outbreaker(data = data,

config = null_config,

likelihoods = null_model)

plot(res_null)

plot(res_null, "pi")

plot(res_null, "mu")

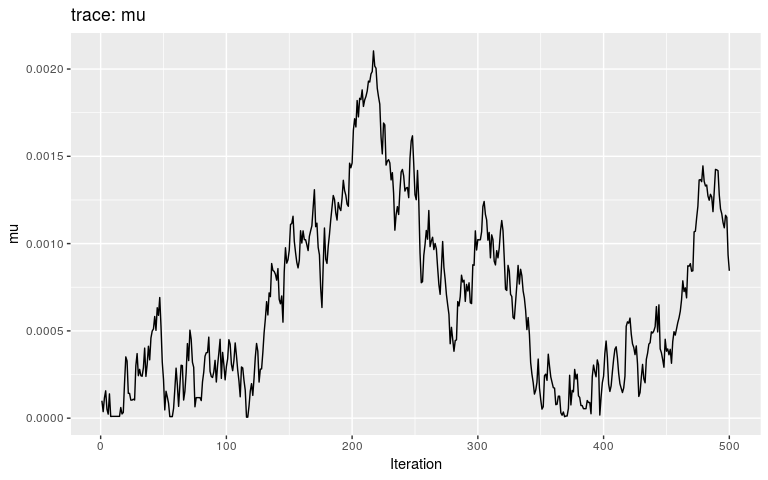

By typical MCMC standards, these traces look appaling, as they haven’t reach stationarity (i.e. same mean and variance over time), and are grossly autocorrelated in parts. Fair enough, as these are only the first 500 iterations of the MCMC, so that autocorrelation is expected. In fact, what we observe here literally is the random walk across the posterior landscape, which in this case is only impacted by the priors.

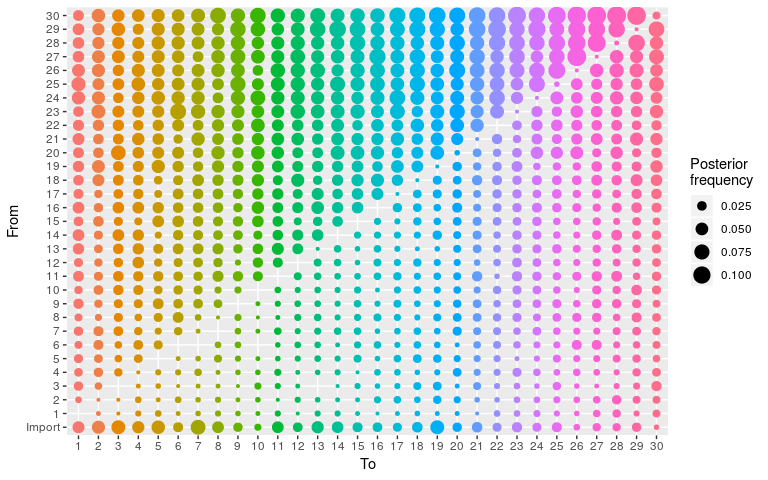

We can check that transmission trees are indeed freely explored:

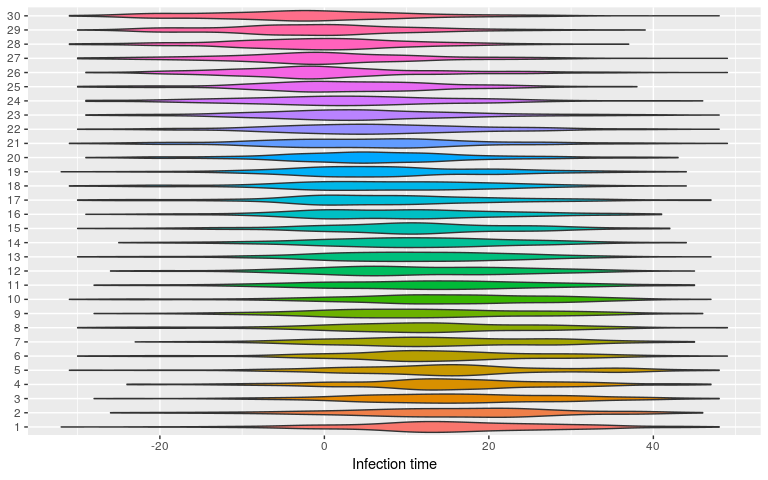

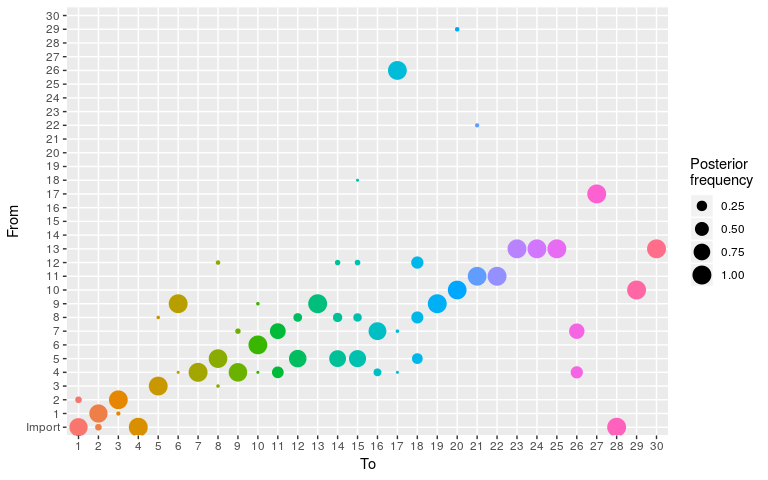

plot(res_null, type = "alpha")

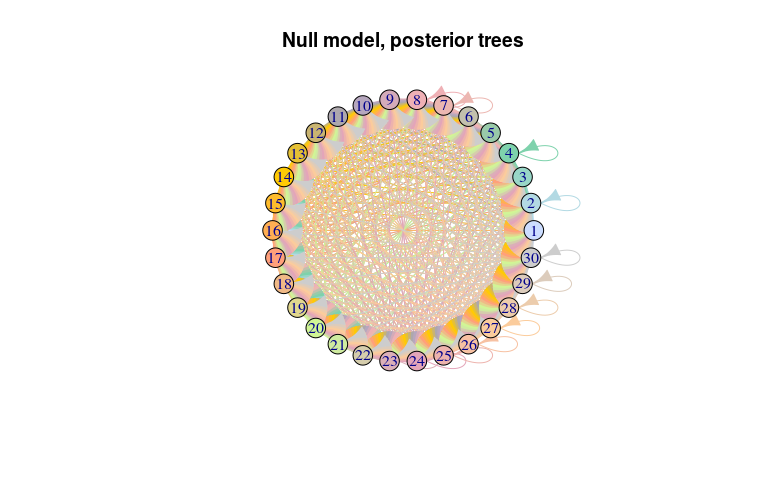

Do not try to render the corresponding network using plot(..., type = "network") as the force-direction algorithm will go insane. However, this network can be visualised using igraph, extracting the edges and nodes from the plot (without displaying it):

## extract nodes and edges from the visNetwork object

temp <- plot(res_null, type = "network", min_support = 0)

class(temp)

#> [1] "visNetwork" "htmlwidget"

head(temp$x$edges)

#> from to value arrows color

#> 1 1 1 0.004 to #CCDDFF

#> 2 1 2 0.026 to #CCDDFF

#> 3 1 3 0.036 to #CCDDFF

#> 4 1 4 0.040 to #CCDDFF

#> 5 1 5 0.020 to #CCDDFF

#> 6 1 6 0.042 to #CCDDFF

head(temp$x$nodes)

#> id label value color shape shaped

#> 1 1 1 0.916 #CCDDFF dot star

#> 2 2 2 0.940 #B2D9E3 dot <NA>

#> 3 3 3 0.984 #98D6C7 dot <NA>

#> 4 4 4 0.986 #7ED2AC dot <NA>

#> 5 5 5 0.900 #99CAA9 dot <NA>

#> 6 6 6 1.066 #C2C0AD dot <NA>

## make an igraph object

library(igraph)

#>

#> Attaching package: 'igraph'

#> The following objects are masked from 'package:stats':

#>

#> decompose, spectrum

#> The following object is masked from 'package:base':

#>

#> union

net_null <- graph.data.frame(temp$x$edges,

vertices = temp$x$nodes[1:4])

plot(net_null, layout = layout.circle,

main = "Null model, posterior trees")

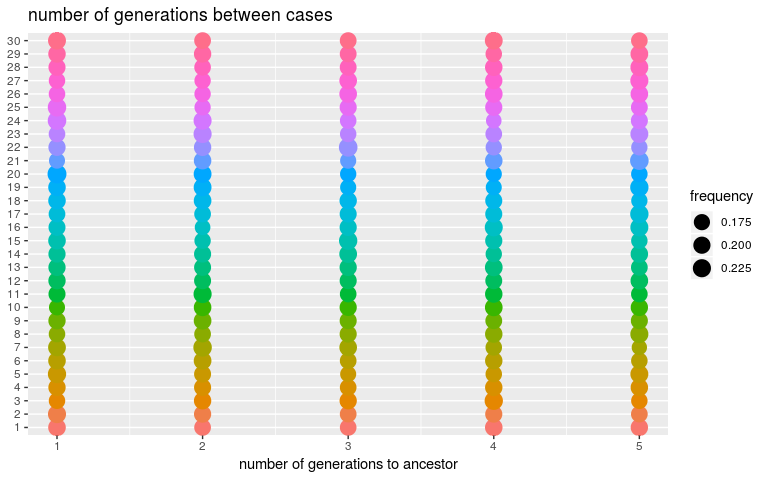

We can derive similar diagnostics for the number of generations betweens cases (kappa), only constrained by default settings to be between 1 and 5, and for the infection dates (t_inf):

plot(res_null, type = "kappa")

plot(res_null, type = "t_inf")

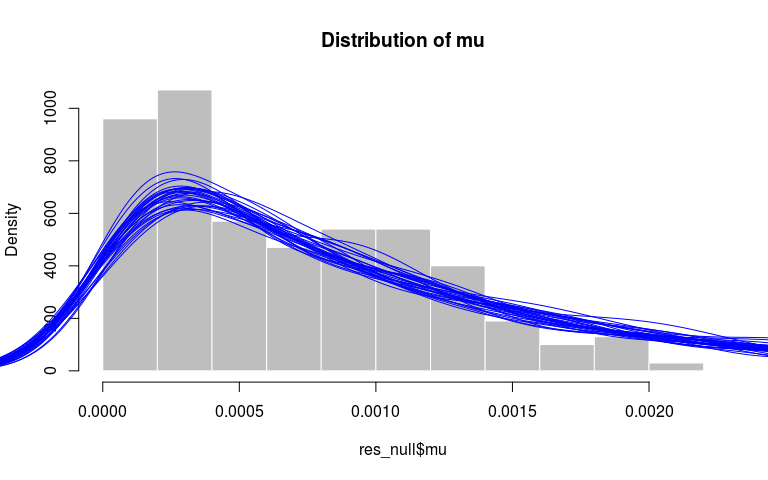

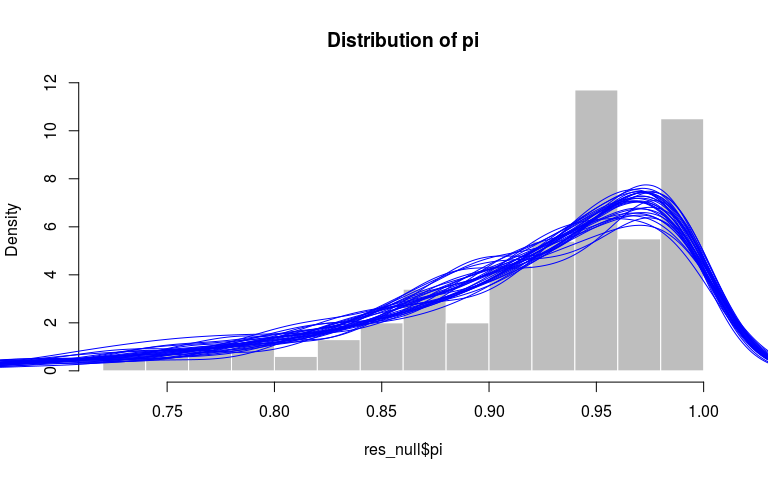

Finally, we can verify that the distributions of mu and pi match their priors, respectively an exponential distribution with rate 1000 and a beta with parameters 10 and 1. Here, we get a qualitative assessment by comparing the observed distribution (histograms) to the densities of similar sized random samples from the priors:

par(xpd=TRUE)

hist(res_null$mu, prob = TRUE, col = "grey",

border = "white",

main = "Distribution of mu")

invisible(replicate(30,

points(density(rexp(500, 1000)), type = "l", col = "blue")))

hist(res_null$pi, prob = TRUE, col = "grey",

border = "white", main = "Distribution of pi")

invisible(replicate(30,

points(density(rbeta(500, 10, 1)), type = "l", col = "blue")))

A model using symptom onset only

We can use data and likelihood customisation to change the default outbreaker2 model into a Wallinga & Teunis (1994) model. To do so, we need to:

Remove the DNA sequences from the data; alternatively we could also specify a ‘null’ function (i.e. returning a finite constant, as above) for the genetic likelihood.

Disable all likelihood components other than

timing_infectionsusingcustom_likelihoods. This means that the dates provided will be treated as dates of symptom onset, and the timing distributionwwill be taken as the serial interval.-

Disable the detection of imported cases, and forcing all

kappavalues to be

While these are fairly major changes, they are straightforward to implement. We first create the dataset and custom likelihood functions:

onset_data <- outbreaker_data(dates = fake_outbreak$onset,

w_dens = fake_outbreak$w)

wt_model <- custom_likelihoods(timing_sampling = f_null,

reporting = f_null)To fix parameters or augmented data (here, fix all kappa values to 1), we set the initial states to the desired values and disable the corresponding moves:

wt_config <- create_config(init_kappa = 1, move_kappa = FALSE,

init_pi = 1, move_pi = FALSE,

move_mu = FALSE)We can now run the analyses for this new model:

set.seed(1)

res_wt <- outbreaker(data = onset_data,

config = wt_config,

likelihoods = wt_model)

#> Can't use seqTrack initialization with missing DNA sequences; using a star-like tree

plot(res_wt)

plot(res_wt, burnin = 500)

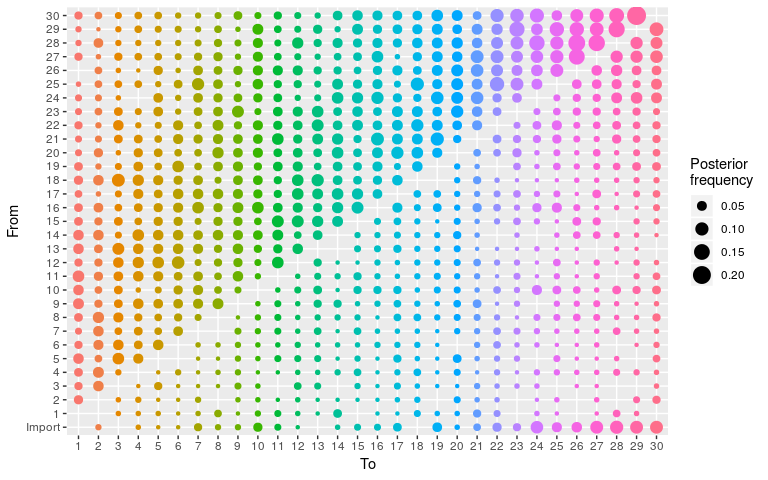

plot(res_wt, burnin = 500, type = "alpha")

summary(res_wt)

#> $step

#> first last interval n_steps

#> 1 10000 50 201

#>

#> $post

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -354.93 -35.56 -33.78 -35.23 -31.48 -26.79

#>

#> $like

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -357.23 -37.86 -36.08 -37.53 -33.78 -29.10

#>

#> $prior

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 2.302 2.302 2.302 2.302 2.302 2.302

#>

#> $mu

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 1e-04 1e-04 1e-04 1e-04 1e-04 1e-04

#>

#> $pi

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 1 1 1 1 1 1

#>

#> $tree

#> from to time support generations

#> 1 4 1 1 0.05472637 1

#> 2 NA 2 1 0.05472637 NA

#> 3 25 3 1 0.06467662 1

#> 4 21 4 0 0.07462687 1

#> 5 26 5 0 0.08457711 1

#> 6 19 6 -1 0.06965174 1

#> 7 15 7 1 0.06467662 1

#> 8 11 8 0 0.06467662 1

#> 9 15 9 -1 0.04975124 1

#> 10 12 10 -1 0.05472637 1

#> 11 16 11 0 0.05970149 1

#> 12 2 12 0 0.07462687 1

#> 13 24 13 0 0.05970149 1

#> 14 10 14 1 0.06467662 1

#> 15 9 15 1 0.07462687 1

#> 16 22 16 0 0.05472637 1

#> 17 2 17 0 0.05472637 1

#> 18 1 18 1 0.05472637 1

#> 19 24 19 0 0.06965174 1

#> 20 28 20 0 0.05970149 1

#> 21 12 21 -1 0.05472637 1

#> 22 10 22 -1 0.05970149 1

#> 23 5 23 1 0.06965174 1

#> 24 11 24 0 0.05970149 1

#> 25 10 25 0 0.05472637 1

#> 26 22 26 2 0.07462687 1

#> 27 7 27 0 0.05970149 1

#> 28 17 28 2 0.05970149 1

#> 29 2 29 0 0.06965174 1

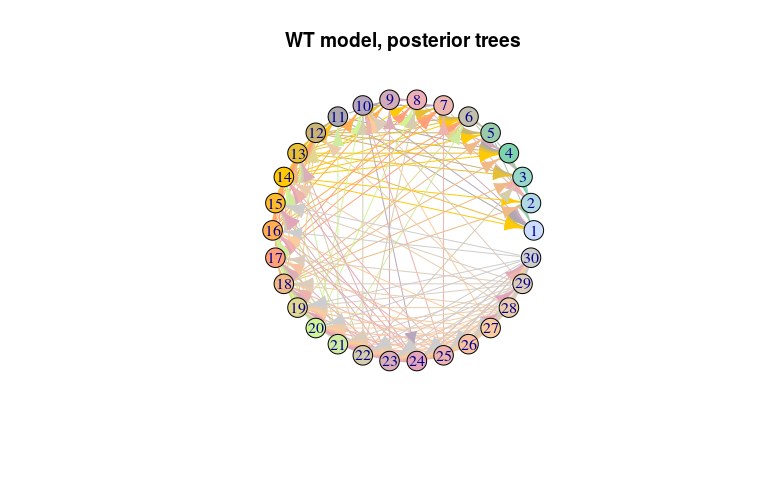

#> 30 22 30 2 0.07462687 1As before for the ‘null’ model, the transmission tree is very poorly resolved in this case. We use the same approach to visualise it: extract nodes and edges from the visNetork object, use this information to create an igraph object, and visualise the result using a circular layout:

## extract nodes and edges from the visNetwork object

temp <- plot(res_wt, type = "network", min_support = 0.05)

class(temp)

#> [1] "visNetwork" "htmlwidget"

head(temp$x$edges)

#> from to value arrows color

#> 10 1 11 0.05472637 to #CCDDFF

#> 17 1 18 0.05472637 to #CCDDFF

#> 23 1 24 0.05472637 to #CCDDFF

#> 32 2 4 0.06965174 to #B2D9E3

#> 39 2 12 0.07462687 to #B2D9E3

#> 42 2 15 0.05970149 to #B2D9E3

head(temp$x$nodes)

#> id label value color shape shaped

#> 1 1 1 0.9751244 #CCDDFF dot <NA>

#> 2 2 2 1.0696517 #B2D9E3 dot star

#> 3 3 3 0.9402985 #98D6C7 dot <NA>

#> 4 4 4 1.0845771 #7ED2AC dot <NA>

#> 5 5 5 0.9353234 #99CAA9 dot <NA>

#> 6 6 6 0.9303483 #C2C0AD dot <NA>

## make an igraph object

net_wt <- graph.data.frame(temp$x$edges,

vertices = temp$x$nodes[1:4])

plot(net_wt, layout = layout.circle,

main = "WT model, posterior trees")

Customising movements

Customising movements works in similar ways to priors and likelihoods. In practice, this type of customisation is more complex as it most likely will require evaluation of likelihoods and priors, and therefore require the user to know which functions to all, and how. These are documented in the API vignette. In the following, we provide two examples:

a (fake) Gibbs sampler for the movement of the mutation rate

mua new ‘naive’ movement of ancestries in which infectors are picked at random from all cases

But before getting into these, we need to explicit how movements are happening in outbreaker2.

Movements in outbreaker2

At the core of the outbreaker function, movements are implemented as a list of functions, which are all evaluated in turn during every iteration of the MCMC. All movement functions must obey two rules:

The first argument must be an

outbreaker_paramobject (typically calledparamin the original code); see?create_paramfor details.All movement functions must return a valid,

outbreaker_paramobject.

However, a new difficulty compared to prior or likelihood customisation is that different movements may require different components of the model, and a different set of arguments after param. In fact, this can be seen by examining the arguments of all the default movement functions:

lapply(custom_moves(), args)

#> $mu

#> function (param, data, config, custom_ll = NULL, custom_prior = NULL)

#> NULL

#>

#> $pi

#> function (param, data, config, custom_ll = NULL, custom_prior = NULL)

#> NULL

#>

#> $eps

#> function (param, data, config, custom_ll = NULL, custom_prior = NULL)

#> NULL

#>

#> $lambda

#> function (param, data, config, custom_ll = NULL, custom_prior = NULL)

#> NULL

#>

#> $alpha

#> function (param, data, list_custom_ll = NULL)

#> NULL

#>

#> $swap_cases

#> function (param, data, list_custom_ll = NULL)

#> NULL

#>

#> $t_inf

#> function (param, data, list_custom_ll = NULL)

#> NULL

#>

#> $kappa

#> function (param, data, config, list_custom_ll = NULL)

#> NULLTo handle this difficulty, outbreaker2 transforms every movement function before running the MCMC into a new function with a single parameter param, attaching all other required argument to the function’s environment. The function achieving this transformation is called bind_moves. This function ‘knows’ what these components are for known moves listed aboves. For new, unknown moves, it attaches the following components, provided they are used as arguments of the new function:

data: the processed data; see?outbreaker_dataconfig: the configuration list; seecreate_configlikelihoods: a list of custom likelihood functions; see?custom_likelihoodspriors: a list of custom prior functions; see?custom_priors

See examples in ?bind_moves for details of how this process works.

A (fake) Gibbs sampler for mu

A Gibbs sampler supposes that the conditional distribution of a parameter is known and can directly be sampled from. Here, we use this principle to provide a toy example of custom movement for mu, assuming that this conditional distribution is always an Exponential distribution with a rate of 1000. This is easy to implement; to make sure that the function is actually used, we set a global variable changed when the function is called.

move_mu <- function(param, config) {

NEW_MOVE_HAS_BEEN_USED <<- TRUE

param$mu <- rexp(1, 1000)

return(param)

}

moves <- custom_moves(mu = move_mu)

quick_config <- list(n_iter = 500, sample_every = 1, find_import = FALSE)Note that the new movement function move_mu has two arguments, and that we do not specify config. Internally, what happens is:

## bind quick_config to function

move_mu_intern <- bind_to_function(move_mu, config = quick_config)

## new function has just one argument

move_mu_intern

#> function (param)

#> {

#> NEW_MOVE_HAS_BEEN_USED <<- TRUE

#> param$mu <- rexp(1, 1000)

#> return(param)

#> }

#> <environment: 0x0000000022775760>

## 'config' is in the function's environment

names(environment(move_mu_intern))

#> [1] "config"

## 'config' is actually 'quick_config'

identical(environment(move_mu_intern)$config, quick_config)

#> [1] TRUEWe perform a quick run using this new movement:

NEW_MOVE_HAS_BEEN_USED <- FALSE

set.seed(1)

res_move_mu <- outbreaker(data, quick_config, moves = moves)

NEW_MOVE_HAS_BEEN_USED

#> [1] TRUE

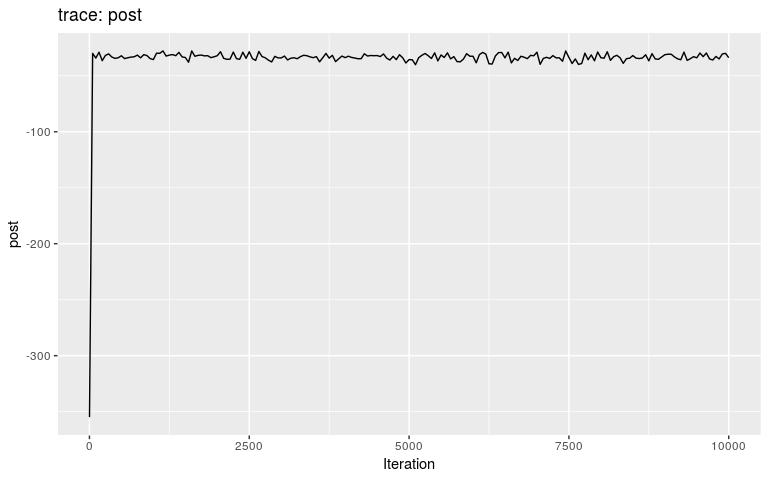

plot(res_move_mu)

plot(res_move_mu, "pi")

plot(res_move_mu, "mu")

This short, full trace, clearly hasn’t mixed well (as is to be expected). But while we see the effect of accept/reject sampling (Metropolis algorithm) for pi with a lot of autocorrelation, the trace of mu shows complete independence between successive values. While the Gibbs sampler used here is not correct, this result is: a Gibbs sampler will be more efficient than the classical Metropolis(-Hasting) algorithm for a given number a iterations.

A new movement of ancestries

Moves of ancestries are done in two ways in outbreaker: by picking ancestors at random from any prior case, and by swapping cases from a transmission link. Here, we implement a new move, which will propose infectors which have been infected on the same day of the current infector. As before, we will use global variables to keep track of the resulting movements (see N_ACCEPT and N_REJECT).

api <- get_cpp_api()

new_move_ances <- function(param, data, custom_likelihoods = NULL) {

for (i in 1:data$N) {

current_ances <- param$alpha[i]

if (!is.na(current_ances)) {

## find cases infected on the same days

current_t_inf <- param$t_inf[current_ances]

pool <- which(param$t_inf == current_t_inf)

pool <- setdiff(pool, i)

if (length(pool) > 0) {

## propose new ancestor

current_ll <- api$cpp_ll_all(data, param, i = i, custom_likelihoods)

param$alpha[i] <- sample(pool, 1)

new_ll <- api$cpp_ll_all(data, param, i = i, custom_likelihoods)

## likelihood ratio - no correction, move is symmetric

ratio <- exp(new_ll - current_ll)

## accept / reject

if (runif(1) <= ratio) { # accept

N_ACCEPT <<- N_ACCEPT + 1

} else { # reject

N_REJECT <<- N_REJECT + 1

param$alpha[i] <- current_ances

}

}

}

}

return(param)

}

moves <- custom_moves(new_move = new_move_ances)We can now use this new move in our transmission tree reconstruction. We will use a shorter chain than the defaults as this new move is likely to be computer intensive.

N_ACCEPT <- 0

N_REJECT <- 0

set.seed(1)

res_new_move <- outbreaker(data, list(move_kappa = FALSE), moves = moves)

N_ACCEPT

#> [1] 150958

N_REJECT

#> [1] 263986

plot(res_new_move)

plot(res_new_move, type = "alpha")

summary(res_new_move)

#> $step

#> first last interval n_steps

#> 1 10000 50 201

#>

#> $post

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -1087.1 -447.3 -444.8 -448.5 -443.0 -436.8

#>

#> $like

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -1088.4 -449.5 -446.9 -450.6 -445.2 -439.0

#>

#> $prior

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -0.01622 1.99783 2.14793 2.05799 2.23686 2.30230

#>

#> $mu

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 8.573e-05 1.282e-04 1.421e-04 1.453e-04 1.619e-04 2.329e-04

#>

#> $pi

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 0.7729 0.9667 0.9830 0.9737 0.9927 1.0000

#>

#> $tree

#> from to time support generations

#> 1 NA 1 -1 0.004975124 NA

#> 2 1 2 1 0.995024876 1

#> 3 2 3 3 0.995024876 1

#> 4 NA 4 3 0.014925373 NA

#> 5 3 5 4 0.985074627 1

#> 6 4 6 5 0.965174129 1

#> 7 4 7 5 0.985074627 1

#> 8 5 8 6 0.960199005 1

#> 9 13 9 7 0.995024876 1

#> 10 6 10 7 0.995024876 1

#> 11 7 11 7 0.681592040 1

#> 12 5 12 7 0.850746269 1

#> 13 6 13 6 0.995024876 1

#> 14 5 14 7 0.761194030 1

#> 15 5 15 7 0.800995025 1

#> 16 7 16 8 0.805970149 1

#> 17 7 17 7 0.626865672 1

#> 18 8 18 9 0.467661692 1

#> 19 9 19 9 1.000000000 1

#> 20 10 20 10 0.985074627 1

#> 21 11 21 10 0.970149254 1

#> 22 11 22 10 1.000000000 1

#> 23 13 23 9 1.000000000 1

#> 24 13 24 9 1.000000000 1

#> 25 13 25 9 1.000000000 1

#> 26 17 26 9 0.990049751 1

#> 27 17 27 10 1.000000000 1

#> 28 NA 28 9 NA NA

#> 29 10 29 10 1.000000000 1

#> 30 13 30 10 1.000000000 1Results show a switch to a new mode at about 5000 iterations. Let us compare the consensus tree to the actual one (store in fake_outbreak$ances):

summary(res_new_move, burnin = 5000)

#> $step

#> first last interval n_steps

#> 5050 10000 50 100

#>

#> $post

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -452.5 -447.0 -444.5 -445.0 -443.0 -437.9

#>

#> $like

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> -453.9 -449.2 -446.5 -447.1 -445.2 -440.2

#>

#> $prior

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 1.212 2.020 2.170 2.086 2.238 2.302

#>

#> $mu

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 0.0001006 0.0001281 0.0001416 0.0001450 0.0001620 0.0002308

#>

#> $pi

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 0.8859 0.9691 0.9854 0.9765 0.9929 1.0000

#>

#> $tree

#> from to time support generations

#> 1 NA 1 -1 NA NA

#> 2 1 2 1 1.00 1

#> 3 2 3 3 0.99 1

#> 4 NA 4 3 0.01 NA

#> 5 3 5 4 0.99 1

#> 6 4 6 5 0.96 1

#> 7 4 7 5 0.99 1

#> 8 5 8 6 0.95 1

#> 9 13 9 7 1.00 1

#> 10 6 10 7 1.00 1

#> 11 7 11 7 0.70 1

#> 12 5 12 7 0.88 1

#> 13 6 13 6 1.00 1

#> 14 5 14 7 0.79 1

#> 15 5 15 7 0.81 1

#> 16 7 16 8 0.85 1

#> 17 7 17 7 0.68 1

#> 18 8 18 9 0.47 1

#> 19 9 19 9 1.00 1

#> 20 10 20 10 0.97 1

#> 21 11 21 10 0.98 1

#> 22 11 22 10 1.00 1

#> 23 13 23 9 1.00 1

#> 24 13 24 9 1.00 1

#> 25 13 25 8 1.00 1

#> 26 17 26 9 1.00 1

#> 27 17 27 10 1.00 1

#> 28 NA 28 9 NA NA

#> 29 10 29 10 1.00 1

#> 30 13 30 10 1.00 1

tree2 <- summary(res_new_move, burnin = 5000)$tree

comparison <- data.frame(case = 1:30,

inferred = paste(tree2$from),

true = paste(fake_outbreak$ances),

stringsAsFactors = FALSE)

comparison$correct <- comparison$inferred == comparison$true

comparison

#> case inferred true correct

#> 1 1 NA NA TRUE

#> 2 2 1 1 TRUE

#> 3 3 2 2 TRUE

#> 4 4 NA NA TRUE

#> 5 5 3 3 TRUE

#> 6 6 4 4 TRUE

#> 7 7 4 4 TRUE

#> 8 8 5 5 TRUE

#> 9 9 13 6 FALSE

#> 10 10 6 6 TRUE

#> 11 11 7 7 TRUE

#> 12 12 5 8 FALSE

#> 13 13 6 9 FALSE

#> 14 14 5 5 TRUE

#> 15 15 5 5 TRUE

#> 16 16 7 7 TRUE

#> 17 17 7 7 TRUE

#> 18 18 8 8 TRUE

#> 19 19 9 9 TRUE

#> 20 20 10 10 TRUE

#> 21 21 11 11 TRUE

#> 22 22 11 11 TRUE

#> 23 23 13 13 TRUE

#> 24 24 13 13 TRUE

#> 25 25 13 13 TRUE

#> 26 26 17 17 TRUE

#> 27 27 17 17 TRUE

#> 28 28 NA NA TRUE

#> 29 29 10 10 TRUE

#> 30 30 13 13 TRUE

mean(comparison$correct)

#> [1] 0.9